CZI Learning Commons

Ages 5-17 · Free · AI Product · learningcommons.org

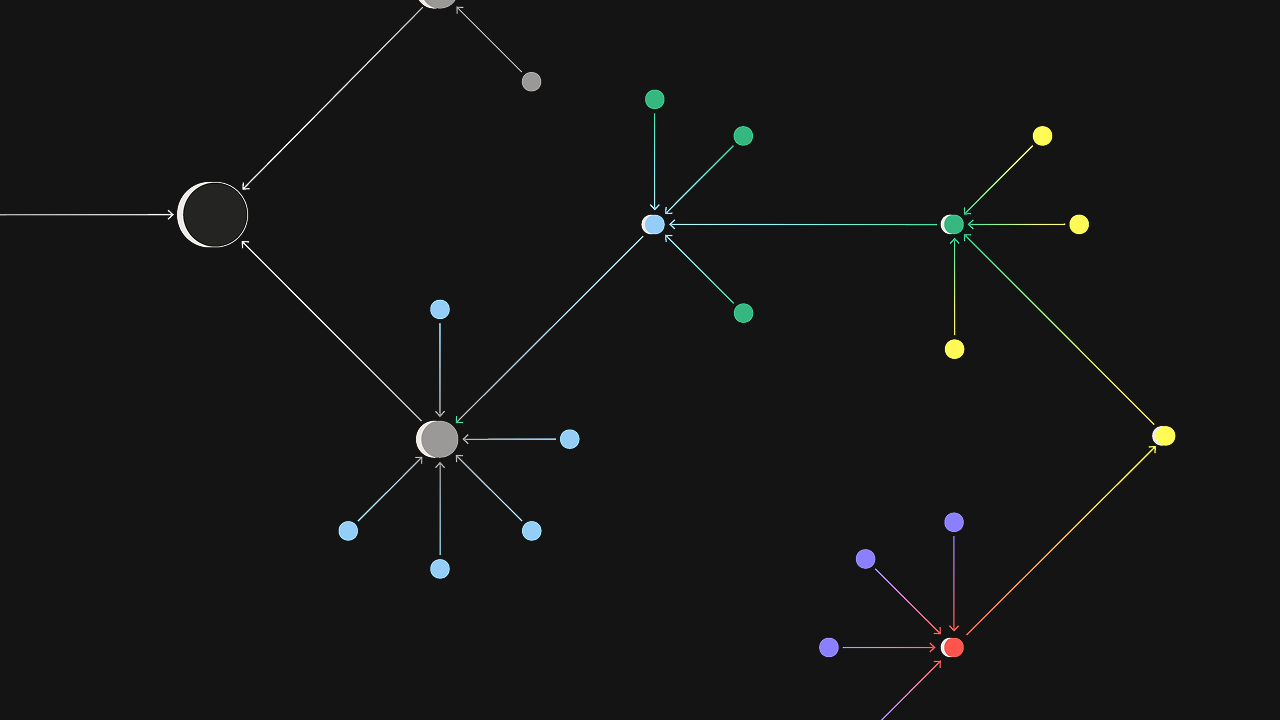

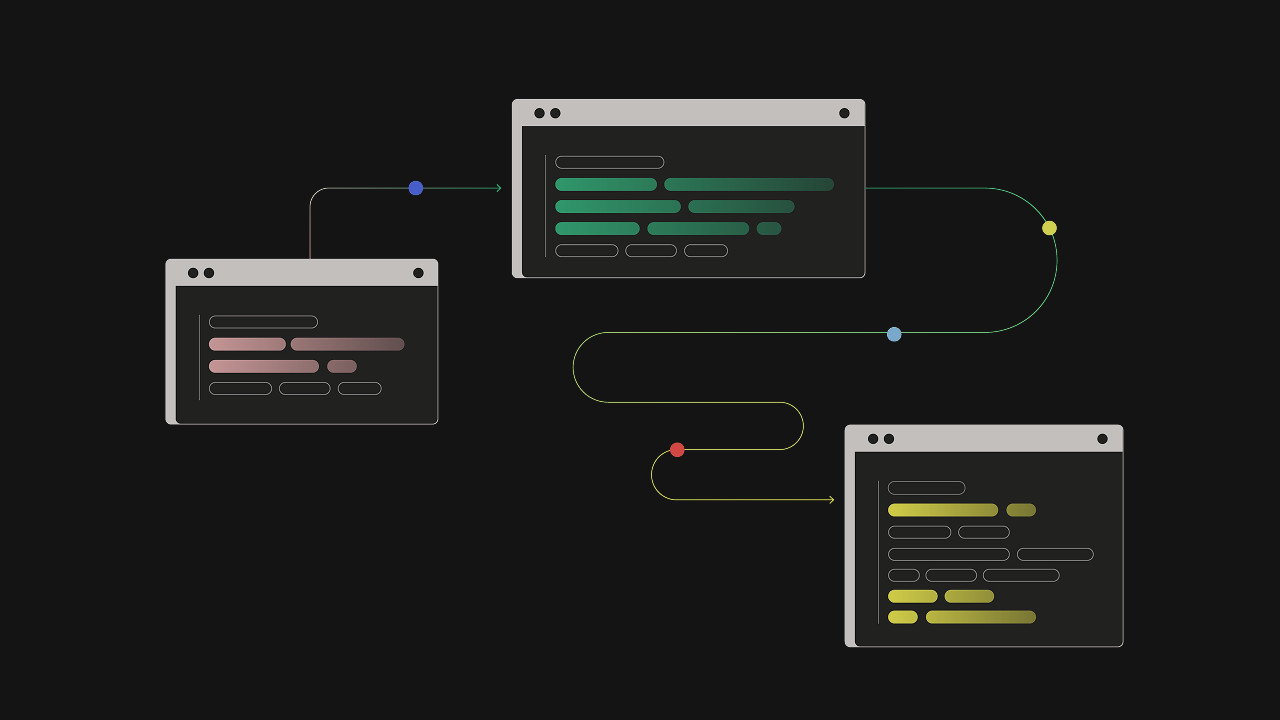

Learning Commons is public infrastructure for people building education technology. Its main products are Knowledge Graph, which links standards, curriculum, and learning science data, and Evaluators, which score AI-generated educational content for quality and grade fit. It is closer to a shared data and quality layer than to a student-facing learning app.

We've reviewed CZI Learning Commons against our 9-literacy developmental framework. The main growth opportunity: Learning Commons is too indirect to score richly under a child-centered rubric.

Strengths & gaps

Strengths

- Learning Commons is serious public infrastructure. Knowledge Graph and Evaluators are designed to improve the quality of AI educational tools rather than just increase output.

- Learning Commons seems anchored in learning science and standards alignment. That gives it more pedagogical substance than generic AI tooling.

- Learning Commons’ strongest developmental link is judgment. Better evaluators and better grounding can help downstream tools make more defensible educational decisions.

Gaps

- Learning Commons is too indirect to score richly under a child-centered rubric. Most capacity effects depend on the products built on top of it.

- Learning Commons does not provide enough direct evidence of learner behavior within the scope reviewed.

- Learning Commons would need a narrower learner-facing product scope for a fuller New Literacies (NL) profile.

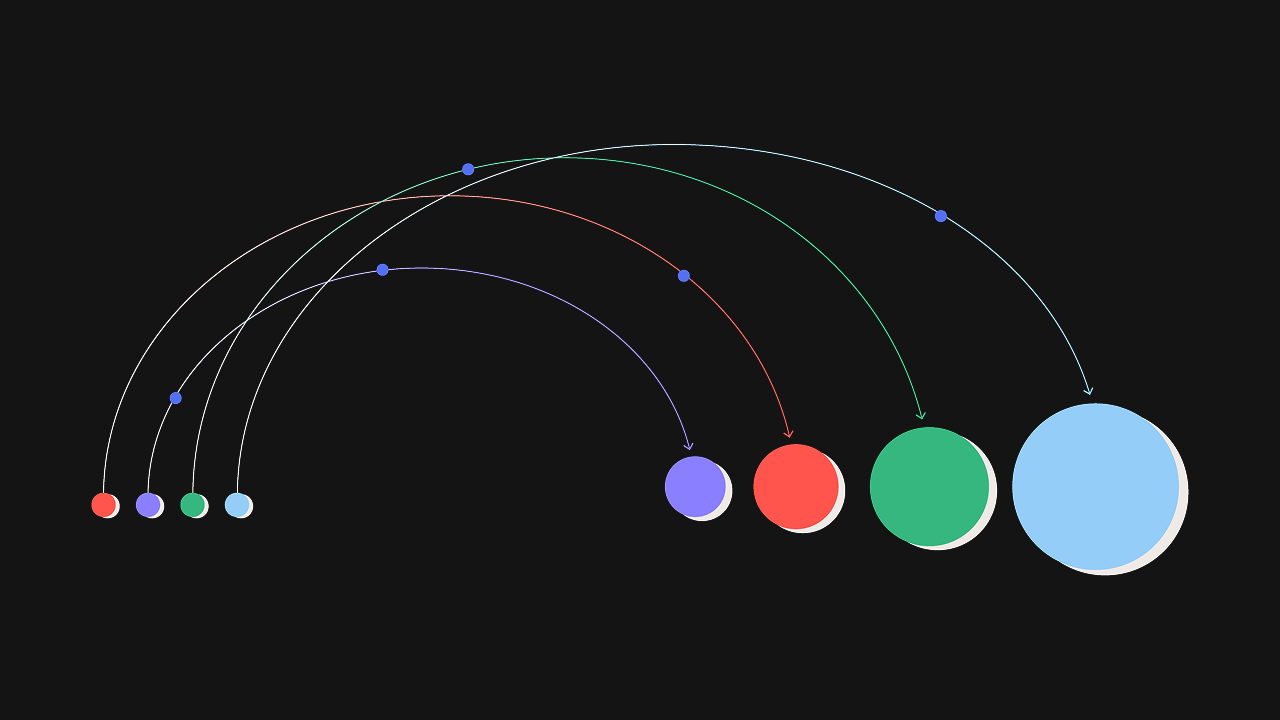

Detailed scores

How CZI Learning Commons performs on each of the 9 literacies in our framework.

Doing

— 0 of 3 Strong

Learning Commons is designed for developers and educators, not children, so there is no direct child-facing interaction to score for agency. Agency is Not Assessed.

The corpus does not show students persisting through challenge in Learning Commons itself. It may support other tools that do. That is not enough for a direct persistence score.

Knowledge Graph and Evaluators may help other products adapt better to learners. But the current package is infrastructure, not an observed child adaptability exercise. Adaptability remains Not Assessed.

Thinking

— 0 of 3 Strong

There is no direct evidence of children exploring, questioning, or following interests through Learning Commons itself. Curiosity is too indirect to score.

This package covers datasets and evaluators, not a child-facing creative tool. Creativity is outside the scoped experience and remains Not Assessed.

If Learning Commons contributes anywhere on this rubric, it is judgment. The whole project is built around better standards alignment, better AI evaluation, and more rigorous educational decisions. That is still indirect, but it is the clearest downstream developmental affordance in the source set.

Being

— 0 of 3 Strong

The available sources do not show Learning Commons structuring relationships, collaboration, or belonging for children directly. Connection remains Not Assessed.

Nothing in the corpus shows children practicing attention management or emotional regulation through the product itself. Self-Regulation stays Not Assessed.

Learning Commons has a public-good mission. But that is not the same as children practicing purpose through the product. Purpose remains Not Assessed.

Based on 6 sources

- Product learningcommons.org

- Product learningcommons.org — about us

- Product learningcommons.org — scaling proven learning practices

- Product docs.learningcommons.org — about knowledge graph

- Product docs.learningcommons.org — about evaluators

- Product learningcommons.org — knowledge graph launches new features

Reviewed by New Literacies

Scored by our research-derived framework · AI-assisted analysis with editorial review · 6 sources reviewed
